Diego Castro Estrada

I'm a graduate in both Computer Science and Mathematics from Florida International University. I'm interested in exploring ways to make machine learning more robust and interpretable, especially in the context of natural language. I plan on approaching these questions from a mathematical point of view by leveraging tools from optimization and geometry.

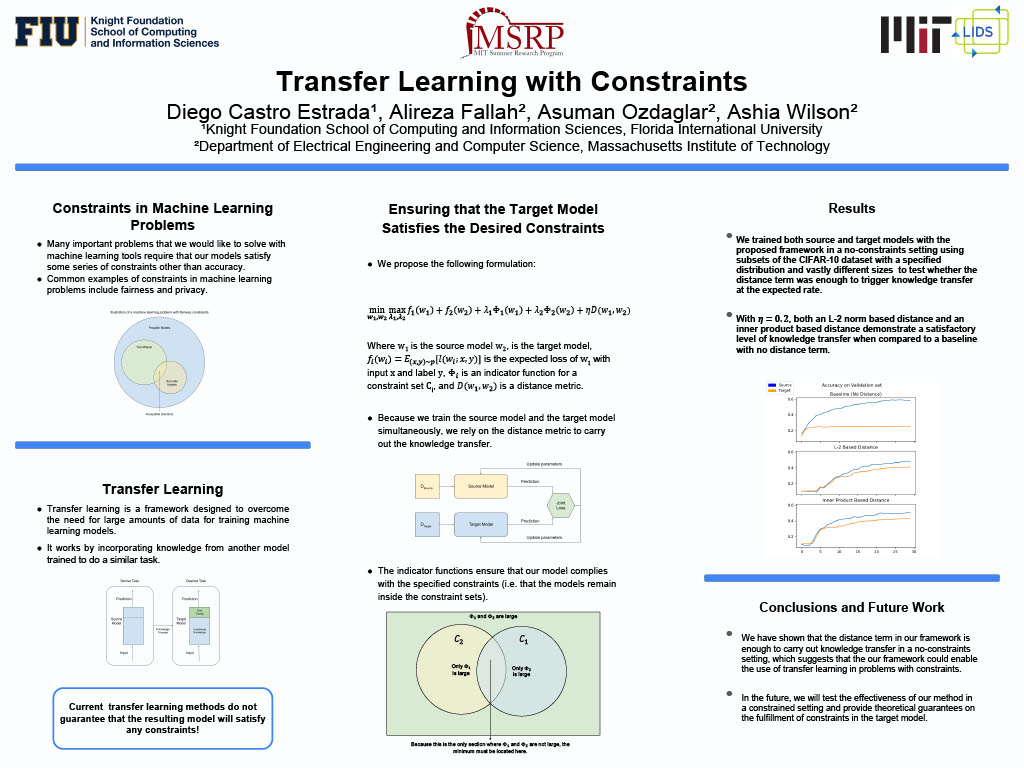

Presented work done at the MIT Summer Research Program (MSRP) under the supervision of Drs. Asuman Ozdaglar and Alireza Fallah. We worked on developing a transfer learning framework that gives guarantees on the preservation of desirable properties of the source model. For instance, given a differentially private source model, we try to give conditions under which the transferred model is also differentially private. Our proposed method tunes the source model and the target model simultaneously by minimizing a joint cost function that combines a distance term with a number of indicator functions for constraint sets.
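As a rough sketch only, a joint objective of this shape could be written as below. The notation is assumed here for illustration and is not taken from the project itself: $\theta_s$ and $\theta_t$ stand for the source and target parameters, $d$ for the distance term, $\lambda > 0$ for a weight, and $C_1, \dots, C_k$ for the constraint sets. Any additional terms the actual cost function may contain are not shown.

$$
\min_{\theta_s,\,\theta_t} \;\; \lambda\, d(\theta_s, \theta_t) \;+\; \sum_{i=1}^{k} \iota_{C_i}(\theta_s, \theta_t),
\qquad
\iota_{C}(x) =
\begin{cases}
0 & \text{if } x \in C,\\
+\infty & \text{otherwise.}
\end{cases}
$$

Each indicator term is zero inside its constraint set and infinite outside it, so minimizing the sum is equivalent to minimizing the distance term subject to all constraints holding at once.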

Presented work done at FIU's Applied Mathematics Research Program for Undergraduates (AMRPU) in collaboration with Grayson Light. Our project explored the properties of a novel type of Riemannian submersion from an almost-product Riemannian manifold into a Riemannian manifold, called Pointwise Bi-Slant Riemannian Submersions (PBSRSs). We discovered several novel ways to factor the PBSRSs into vertical and horizontal components. We also outlined the conditions under which such distributions are totally geodesic, integrable, and pluriharmonic.

[report available here]

Presented work done during Capstone I (part 1 of the senior project class at FIU). Worked with a team to develop a Fourier-Motzkin-based system for evaluating the validity of systems of inequalities involving information-theoretic quantities (entropy, mutual information, cross-entropy, etc.). The system leverages well-known information theory results to enhance its efficacy.
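For readers unfamiliar with the technique, here is a minimal sketch of plain Fourier-Motzkin elimination used as a feasibility check for a system of linear inequalities. It is not the Capstone system: the function names `eliminate` and `is_feasible` and the row encoding are assumptions made for this example, and it includes none of the information-theoretic knowledge mentioned above.

```python
from fractions import Fraction


def eliminate(rows, j):
    """One Fourier-Motzkin step: project out variable j.

    Each row (a, b) encodes the inequality sum(a[i] * x[i]) <= b.
    """
    pos = [r for r in rows if r[0][j] > 0]
    neg = [r for r in rows if r[0][j] < 0]
    out = [r for r in rows if r[0][j] == 0]
    for ap, bp in pos:
        for an, bn in neg:
            # Scale so the coefficients of x[j] become +1 and -1, then add
            # the two rows so that x[j] cancels exactly.
            sp = Fraction(1) / ap[j]
            sn = Fraction(1) / -an[j]
            a = [sp * u + sn * v for u, v in zip(ap, an)]
            out.append((a, sp * bp + sn * bn))
    return out


def is_feasible(rows, n):
    """Return True if the system over n real variables has a solution."""
    for j in range(n):
        rows = eliminate(rows, j)
    # Every coefficient is now zero, so each remaining row reads 0 <= b.
    return all(b >= 0 for _, b in rows)


# x + y <= 4, x >= 3, y >= 2 cannot all hold; relaxing to y >= 1 can.
infeasible = [([1, 1], 4), ([-1, 0], -3), ([0, -1], -2)]
feasible = [([1, 1], 4), ([-1, 0], -3), ([0, -1], -1)]
print(is_feasible(infeasible, 2), is_feasible(feasible, 2))
```

Exact rational arithmetic via `Fraction` avoids floating-point error when rows are combined. The number of rows can grow quickly with each eliminated variable, which is one reason a practical system would want to prune redundant inequalities.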